Hey everyone,

Here is how AI policy disasters actually happen.

A junior employee pastes the company's entire client database into ChatGPT to write a segmentation report. A sales manager uses AI to generate a proposal and it includes a completely fabricated case study attributed to a real client. A developer debugs code containing proprietary algorithms and the conversation is stored on OpenAI's servers.

None of these people did anything malicious. None of them thought they were doing anything wrong. They were using the tools available to them to do their jobs faster.

The organisation had no policy telling them not to.

Why Most AI Policies Fail Before They Start

Most policies are written by legal, for legal. The result is a document nobody reads, written in language nobody understands, covering situations nobody encounters.

A working AI policy covers the most important risks in plain language with specific examples. Here is how to build one.

The Seven Components Every AI Policy Needs

Component 1 — Purpose and Principles

Start with three principles that govern everything else.

Responsibility — every AI-assisted output has a human owner accountable for its quality

Confidentiality — treat AI tools like any external service provider and never share what you would not share externally

Transparency — be honest about AI use with clients where relevant and with colleagues always

If employees understand the principles they can make sensible judgment calls in situations the policy does not explicitly cover.

Component 2 — Acceptable Use

Structure this in three clear buckets.

| Category | Examples |
|---|---|
| Permitted | Drafting content with human review, research, code writing with testing, brainstorming |
| Conditional | Client documents with senior review, client data with approved tools only, legal content with professional sign-off |
| Prohibited | Fake testimonials, processing personal data without approval, autonomous decisions on consequential matters |

The grey area test: would you be comfortable if this output were attributed entirely to you, with no mention of AI? If yes, proceed. If no, ask first.
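If your team keeps the policy in an internal tool or chatbot, the three buckets can be sketched as a simple lookup. This is only an illustration: the activity keys, the `check_use` function, and the "ask first" fallback for unlisted activities are assumptions made for the example, not part of any standard.

```python
from enum import Enum


class Bucket(Enum):
    PERMITTED = "permitted"
    CONDITIONAL = "conditional"
    PROHIBITED = "prohibited"


# Hypothetical activity keys, paraphrased from the acceptable-use table.
ACCEPTABLE_USE = {
    "drafting_with_human_review": Bucket.PERMITTED,
    "research": Bucket.PERMITTED,
    "code_writing_with_testing": Bucket.PERMITTED,
    "brainstorming": Bucket.PERMITTED,
    "client_documents": Bucket.CONDITIONAL,
    "client_data": Bucket.CONDITIONAL,
    "legal_content": Bucket.CONDITIONAL,
    "fake_testimonials": Bucket.PROHIBITED,
    "personal_data_without_approval": Bucket.PROHIBITED,
    "autonomous_consequential_decisions": Bucket.PROHIBITED,
}

# The condition attached to each conditional activity, taken from the table.
CONDITIONS = {
    "client_documents": "senior review",
    "client_data": "approved tools only",
    "legal_content": "professional sign-off",
}


def check_use(activity: str) -> str:
    """Return plain-language guidance for an activity."""
    bucket = ACCEPTABLE_USE.get(activity)
    if bucket is None:
        # Assumption: anything the policy does not list falls back to "ask first".
        return "Not covered: ask first"
    if bucket is Bucket.CONDITIONAL:
        return f"Conditional: requires {CONDITIONS[activity]}"
    if bucket is Bucket.PERMITTED:
        return "Permitted"
    return "Prohibited"
```

For example, `check_use("legal_content")` returns the condition from the table, while an activity nobody thought to list comes back as "ask first" rather than as a silent yes.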

Component 3 — Data Handling

This is where most AI incidents occur. Employees do not think of pasting information into ChatGPT as sharing data externally. It is.

Level 1 — Public information — any approved tool

Level 2 — Internal information — approved tools with business privacy settings

Level 3 — Confidential — enterprise tools with data processing agreements only

Level 4 — Restricted — no AI tool without explicit sign-off

The practical test before entering any information: would you paste this into an email to an external service provider you just signed up for online? If no, do not proceed.
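The four levels can be written down as a small check, which is handy if you want to build them into an intake form or a browser extension. This is a rough sketch with a few assumptions: the `DataLevel` and `ToolTier` names are invented for the example, a higher tool tier is assumed to satisfy every lower level, and Level 4 with sign-off is assumed to still require an approved tool.

```python
from enum import IntEnum
from typing import Optional


class DataLevel(IntEnum):
    PUBLIC = 1
    INTERNAL = 2
    CONFIDENTIAL = 3
    RESTRICTED = 4


class ToolTier(IntEnum):
    APPROVED = 1          # any approved tool
    BUSINESS_PRIVACY = 2  # approved tool with business privacy settings
    ENTERPRISE_DPA = 3    # enterprise tool with a data processing agreement


# Minimum tool tier for Levels 1 to 3, as listed in the policy.
REQUIRED_TIER = {
    DataLevel.PUBLIC: ToolTier.APPROVED,
    DataLevel.INTERNAL: ToolTier.BUSINESS_PRIVACY,
    DataLevel.CONFIDENTIAL: ToolTier.ENTERPRISE_DPA,
}


def may_enter(level: DataLevel, tool: Optional[ToolTier], signed_off: bool = False) -> bool:
    """Return True if data at this level may be entered into the given tool.

    `tool=None` stands for an unapproved tool.
    """
    if tool is None:
        return False  # unapproved tools are never acceptable
    if level is DataLevel.RESTRICTED:
        # Level 4: no AI tool without explicit sign-off.
        return signed_off
    # Assumption: a higher tier also satisfies the lower levels.
    return tool >= REQUIRED_TIER[level]
```

So `may_enter(DataLevel.CONFIDENTIAL, ToolTier.BUSINESS_PRIVACY)` is `False`, because confidential data needs an enterprise tool with a data processing agreement.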

Component 4 — Output Verification

AI hallucinations are not edge cases. They are a systematic feature of how these models work. Every output needs human verification before use.

Factual claims — verify against primary sources, not the AI's citation

Code — test before deployment, peer review for sensitive functions

Client-facing documents — complete a verification checklist before sending

Legal content — qualified professional review required, no exceptions
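The four rules above can be sketched as a pre-send gate. Treat this as an illustration rather than a finished tool: the content-type keys, the check names, and the generic `human_verification` fallback for unlisted content types are all assumptions made for the example.

```python
from typing import Iterable, List

# Required checks per content type, paraphrased from the list above.
# "sensitive_code" is a hypothetical key for code with sensitive functions.
REQUIRED_CHECKS = {
    "factual_claims": {"verified_against_primary_sources"},
    "code": {"tested"},
    "sensitive_code": {"tested", "peer_reviewed"},
    "client_facing_document": {"verification_checklist_completed"},
    "legal_content": {"qualified_professional_review"},
}

# Assumption: unlisted content types still need some human verification,
# since every output needs it before use.
DEFAULT_CHECKS = {"human_verification"}


def missing_checks(content_type: str, completed: Iterable[str]) -> List[str]:
    """Return the checks still outstanding, in alphabetical order."""
    required = REQUIRED_CHECKS.get(content_type, DEFAULT_CHECKS)
    return sorted(required - set(completed))


def ready_for_use(content_type: str, completed: Iterable[str]) -> bool:
    """True only when every required check has been completed."""
    return not missing_checks(content_type, completed)
```

A call such as `missing_checks("sensitive_code", ["tested"])` returns `["peer_reviewed"]`, which tells the author exactly what is left to do before the output goes anywhere.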

Component 5 — Attribution and Transparency

Disclose AI involvement when AI substantially contributed to the analysis, recommendations, or conclusions of a deliverable.

A simple footer works: "This document was prepared with AI assistance. All analysis has been reviewed and verified by [name]."

Attribution is not an apology. It is a professional disclosure that builds trust.

Component 6 — Escalation

Report immediately when confidential data has been entered into an unapproved tool, when AI content with material errors has been sent to a client, or when AI output has driven a significant decision without verification.

The culture that makes this work: employees need to feel safe reporting near-misses. Make it explicit in the policy: "We would rather know about a near-miss than not know about a serious incident."

Component 7 — Governance

Name a policy owner. Set a review schedule. Build a mechanism for employees to flag situations the policy does not cover. A policy without a named owner gets outdated. A policy without a review schedule gets ignored.

Explore Sponsor #02

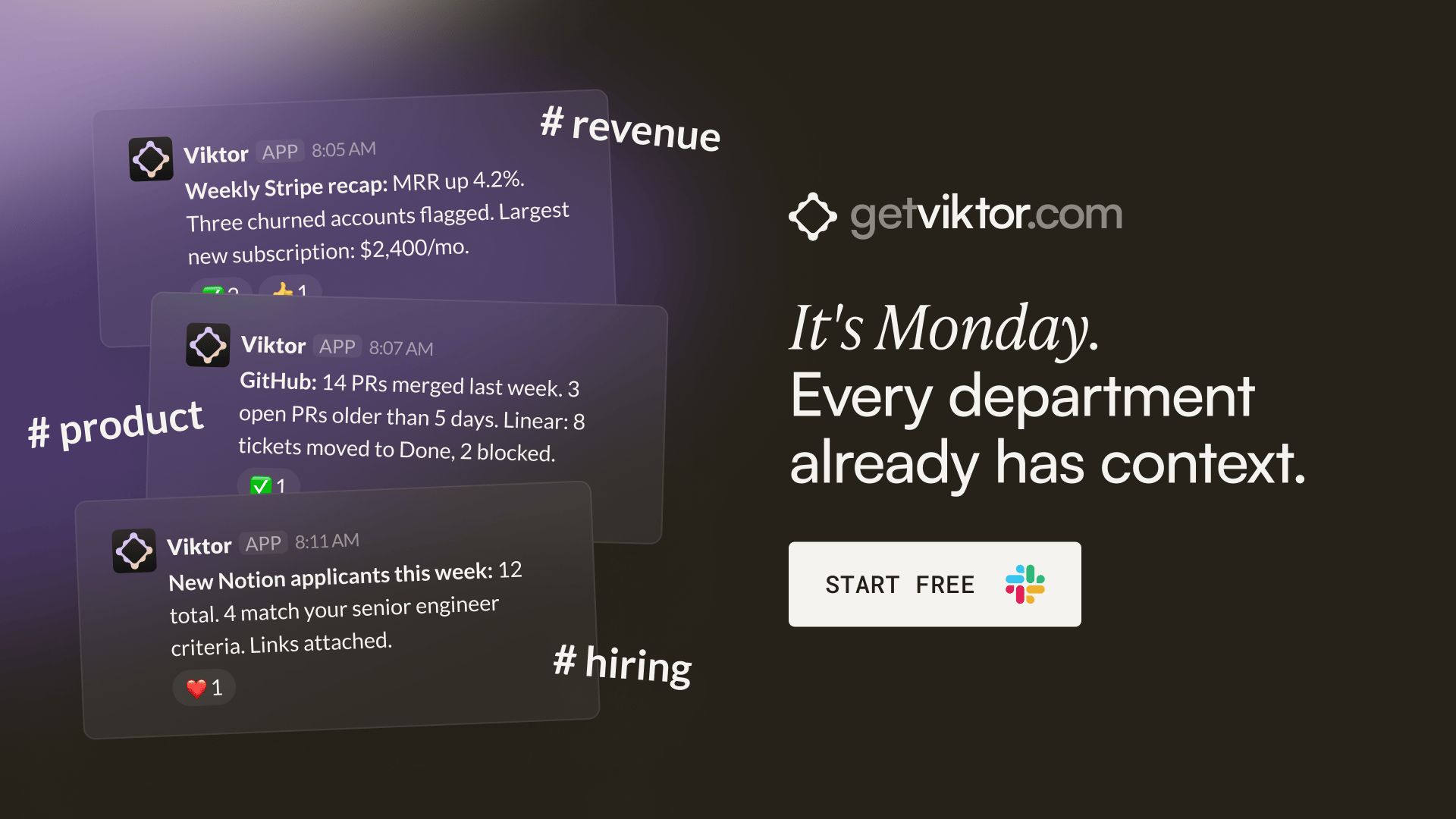

It's Monday. Every department already has context. Nobody prepped anything.

Your CFO opens Slack. There's a weekly Stripe revenue recap in #finance with a churned-accounts flag and a net-new breakdown. She didn't ask for it.

Your head of product opens Slack. There's a GitHub summary in private channel: PRs merged, PRs stale, Linear tickets that moved. He didn't ask for it.

Your marketing lead opens Slack. There's a Google Ads performance comparison in private channel, with a note: "Meta CPA crept up 18% this week. Might be worth pausing the broad match campaign." She didn't ask for it either.

All-hands at 10am. Everyone already knows the numbers. The meeting is about decisions, not catch-up.

That's what happens when one colleague works across every tool your company uses. Not one department's assistant. The whole company's coworker.

Viktor lives in Slack. Top 5 on Product Hunt, 130 comments. SOC 2 certified. Your data never trains models.

"Not only have we caught up on several months of work, we are automating manual tasks and expanding our operations to things previously not possible at scale." - Jesse Guarino, Director, Torque King 4x4

Go Back to the Article

The Five-Minute Version Your Team Will Actually Remember

Use AI. It makes us better. Do not share client data with consumer AI tools. Always verify outputs before they reach clients. Tell someone if something goes wrong. When in doubt, ask.

That is the policy in practice. The full document is the reference.

Catch you next time!

P.S. Forward this to anyone who manages a team using AI. They need it before they need it.